Development teams are increasingly choosing a microservices architecture over monolithic structures in order to boost apps' agility, scalability, and maintainability. With this decision to switch to the modular software architecture — in which each service is a separate unit with its own logic and database that communicates with other units through APIs — comes the need for new testing strategies and new testing tools.

Testing is a key part of building a microservices application: you need to ensure that your code doesn’t break within a unit, that the dependencies between microservices continue to work (and work quickly), and that your APIs meet their defined contracts. Testing, debugging, and maintaining microservices is often the most difficult part of working with a microservices architecture, and also one of the most important. Because many microservices are built with a continuous delivery model to consistently build and deploy features, developers and DevOps teams need accurate and reliable testing strategies to be confident in these features.

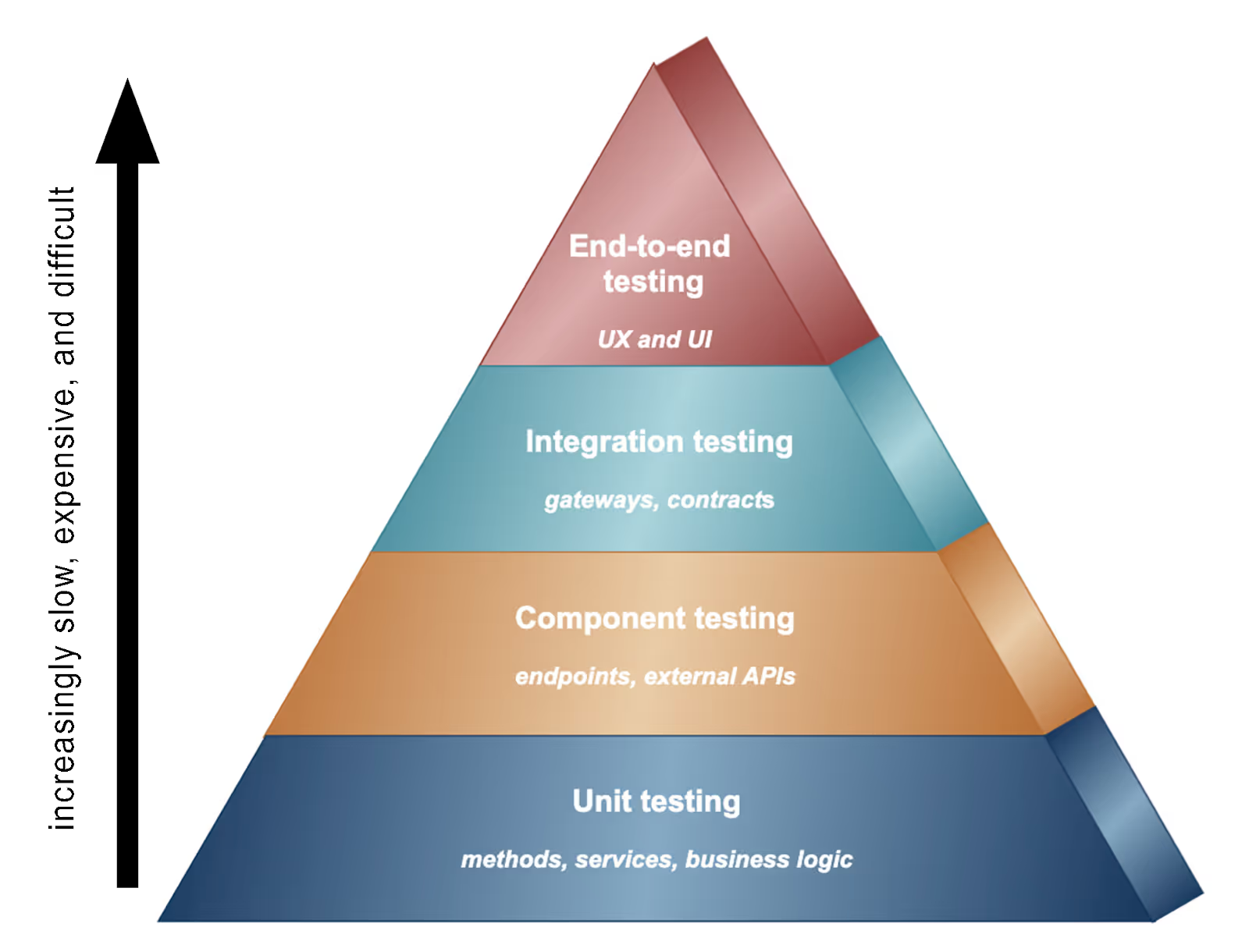

So which types of tests exist for microservices, how do they apply to different areas of your software, and what are their benefits? The well-known “testing pyramid” provides a framework for approaching these tests. According to Martin Fowler, a well-known author on software engineering principles, “The ‘Test Pyramid’ is a metaphor that tells us to group software tests into buckets of different granularity.”

The different layers of the pyramid are defined as:

Unit tests: Test a small part of a service, such as a class

Component tests: Verify the behavior of an individual service

Integration tests: Verify that a service can interact with infrastructure services, such as databases and other application services, by testing the service’s adapters

End-to-end tests: Verify the behavior of the entire application

Note: Some versions of the testing pyramid switch the order of component tests and integration tests.

Combining multiple microservices testing strategies leads to high test coverage and confidence in your software, while also making overall maintenance easier.

Unit testing

The goal of unit testing is to ensure that the smallest portion of a service performs as expected, within the specification decided upon during the microservices design phase. Because microservices break application functionality down into hundreds of small, testable components, unit testing treats each one individually and independently. It’s best practice to unit test at the level of a class or a group of related classes.

Unit testing can cut off all dependencies of a component by using test doubles such as fakes, stubs, mocks, dummies, and spies. For example, you can mock the responses of your dependencies and "assume they do [X]," where [X] is the correct response, a failure response, and so on.
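As a minimal sketch of this idea, the example below uses Python's standard `unittest.mock` to replace a dependency with a mock and "assume" first a correct response and then a failure. The `PriceService` class, its `tax_client` dependency, and the fallback rate are hypothetical names invented for illustration, not part of any real service.

```python
from unittest.mock import Mock


class PriceService:
    """Hypothetical class under test; it depends on a remote tax client."""

    def __init__(self, tax_client):
        self.tax_client = tax_client

    def total(self, net_amount, country):
        try:
            rate = self.tax_client.get_rate(country)
        except ConnectionError:
            rate = 0.0  # hypothetical fallback when the dependency is down
        return round(net_amount * (1 + rate), 2)


def test_total_with_successful_dependency():
    # "Assume the dependency returns a correct response."
    tax_client = Mock()
    tax_client.get_rate.return_value = 0.25
    service = PriceService(tax_client)
    assert service.total(100.0, "DE") == 125.0
    tax_client.get_rate.assert_called_once_with("DE")


def test_total_when_dependency_fails():
    # "Assume the dependency returns a failure response."
    tax_client = Mock()
    tax_client.get_rate.side_effect = ConnectionError("tax service down")
    service = PriceService(tax_client)
    assert service.total(100.0, "DE") == 100.0
```

Neither test touches the network, so both run quickly and fail only when the logic inside the class changes.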

Component testing

Component testing verifies that a given service is functioning correctly. With the scope limited to a portion of the entire microservices architecture, component testing checks the end-to-end functionality of a chosen microservice (which can be made up of a few classes) by isolating the service within the system, replacing its dependencies with test doubles and/or mock services.

You can create a test environment for each component and divide the work into test cases. Component testing might also cover resource behavior, for example performance testing, detecting memory leaks, and structural testing.
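One way to picture a component test is to drive a single service through its public entry point while an in-memory fake stands in for its downstream dependency. The sketch below assumes a hypothetical `OrderService` with a `handle` method and a hypothetical `FakeInventory` double; a real service would usually be exercised over HTTP or a message queue instead.

```python
import json


class FakeInventory:
    """In-memory test double standing in for a remote inventory service."""

    def __init__(self, stock):
        self.stock = dict(stock)

    def reserve(self, sku, qty):
        if self.stock.get(sku, 0) < qty:
            return False
        self.stock[sku] -= qty
        return True


class OrderService:
    """Hypothetical service, exercised only through its public entry point."""

    def __init__(self, inventory):
        self.inventory = inventory
        self.orders = []

    def handle(self, method, path, body):
        if (method, path) != ("POST", "/orders"):
            return 404, {"error": "not found"}
        order = json.loads(body)
        if not self.inventory.reserve(order["sku"], order["qty"]):
            return 409, {"error": "out of stock"}
        self.orders.append(order)
        return 201, {"order_id": len(self.orders)}


def test_order_component():
    service = OrderService(FakeInventory({"book": 1}))
    request = json.dumps({"sku": "book", "qty": 1})

    # First order succeeds and consumes the only item in stock.
    assert service.handle("POST", "/orders", request) == (201, {"order_id": 1})

    # Second order for the same item is rejected by the service.
    status, _ = service.handle("POST", "/orders", request)
    assert status == 409

    # Unknown routes are handled inside the service as well.
    status, _ = service.handle("GET", "/unknown", "")
    assert status == 404
```

Unlike a unit test, this checks routing, validation, and state changes together, while still keeping the rest of the system out of the picture.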

Integration testing

Integration testing validates that independently developed components/microservices work correctly when they are connected. It tests the communication paths and interactions between components to find errors in how they fit together.

Integration tests are more difficult and time-consuming to write and run than unit or component tests. Therefore, having excellent production QA (Quality Assurance) practices will help ensure this goes smoothly.
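A small integration test typically exercises a service's adapter against the real kind of infrastructure it talks to, rather than a mock. The sketch below assumes a hypothetical `UserRepository` adapter and uses an in-memory SQLite database from Python's standard library as a stand-in for whatever database the service would actually use.

```python
import sqlite3


class UserRepository:
    """Hypothetical persistence adapter for a user service."""

    def __init__(self, connection):
        self.connection = connection
        self.connection.execute(
            "CREATE TABLE IF NOT EXISTS users "
            "(id INTEGER PRIMARY KEY, email TEXT UNIQUE)"
        )

    def add(self, email):
        cursor = self.connection.execute(
            "INSERT INTO users (email) VALUES (?)", (email,)
        )
        self.connection.commit()
        return cursor.lastrowid

    def find_email(self, user_id):
        row = self.connection.execute(
            "SELECT email FROM users WHERE id = ?", (user_id,)
        ).fetchone()
        return row[0] if row else None


def test_repository_round_trip():
    # A real SQL engine runs here, so schema and query errors surface.
    connection = sqlite3.connect(":memory:")
    try:
        repo = UserRepository(connection)
        user_id = repo.add("a@example.com")
        assert repo.find_email(user_id) == "a@example.com"
        assert repo.find_email(user_id + 1) is None

        # The UNIQUE constraint is enforced by the database, not by a mock.
        try:
            repo.add("a@example.com")
            raise AssertionError("expected a uniqueness violation")
        except sqlite3.IntegrityError:
            pass
    finally:
        connection.close()
```

Errors such as a misspelled column or a missing constraint only show up in this kind of test, because a mocked database would accept anything.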

Contract testing

It’s crucial to call out contract testing in the testing pyramid. Contract testing checks the compatibility of separate units (like two microservices) by making sure they can communicate with each other. Because microservices interact with each other through APIs, contract testing verifies that those APIs behave as agreed.

Contract testing checks these microservices’ boundaries and interactions and stores them in a contract, which can then be used as a standard for how both parties interact in the future. It requires both parties to agree on the allowed set of interactions and allows for evolution over time.
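To make the idea concrete, the sketch below stores one agreed interaction in a plain dictionary and checks a provider against it. This is a simplified, hand-rolled illustration and not the API of Pact or any other contract testing tool; `CONTRACT`, `provider_handler`, and `verify_contract` are hypothetical names.

```python
# The agreed interaction: what the consumer sends and what it relies on.
CONTRACT = {
    "request": {"method": "GET", "path": "/users/42"},
    "response": {"status": 200, "body": {"id": int, "email": str}},
}


def provider_handler(method, path):
    """Hypothetical provider implementation."""
    if method == "GET" and path.startswith("/users/"):
        user_id = int(path.rsplit("/", 1)[1])
        # "name" is an extra field the consumer does not depend on.
        return 200, {"id": user_id, "email": "a@example.com", "name": "Ada"}
    return 404, {}


def verify_contract(contract, handler):
    """Return a list of violations; an empty list means the contract holds."""
    request, expected = contract["request"], contract["response"]
    status, body = handler(request["method"], request["path"])
    violations = []
    if status != expected["status"]:
        violations.append(f"status {status} != {expected['status']}")
    for field, field_type in expected["body"].items():
        if field not in body:
            violations.append(f"missing field {field!r}")
        elif not isinstance(body[field], field_type):
            violations.append(f"field {field!r} is not {field_type.__name__}")
    return violations
```

Because the check only looks at fields the consumer relies on, the provider can add new fields without breaking the contract, which is one way such a contract can allow for evolution over time.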

End-to-end testing

End-to-end testing (E2E testing) is the final testing stage that involves testing an application's workflow from beginning to end, for complete user journeys.

These tests can be automated, but E2E testing doesn’t scale well in a microservices architecture: it requires you to spin up many microservices and wire them together, which is difficult to automate and maintain. As a result, it’s usually reserved for the most critical user flows and the interactions between specific microservices that support them.

Testing tools

Developers and QA teams have different preferences for microservices testing tools, especially across these different types of tests. Here’s a rundown of some popular ones. Many are on-demand staging environments, which are created dynamically and triggered by a CI/CD pipeline. With on-demand staging, once a developer is done with the environment, it is destroyed, along with any configuration, environment, or installation inconsistencies.

Release is an on-demand staging environment platform with accessible sharing capabilities for collaboration. You can connect your applications' repositories to Release, which then creates an ephemeral environment with every pull request and updates it with each code push. Additionally, environments can be created for integration, traditional staging, or QA/UAT use cases. Developers and QA have full access to environments to test and debug, while product teams, design teams, and stakeholders can see features evolving and provide feedback early and often.

WebApp.io (formerly LayerCI)

WebApp.io is a code review automation platform that provides on-demand review environments for full-stack web applications. You can create custom pull requests, and once you’ve created one copy of your stack, you can duplicate it instantly to run E2E tests automatically and integrate with CI/CD workflows. WebApp.io will automatically annotate your pull request in GitHub, GitLab, or Bitbucket.

Vercel is a cloud platform for frontend frameworks, Serverless Functions, and static sites, built to integrate with pre-existing content and databases. It hosts websites and web services that deploy instantly, scale automatically, and require no supervision, all with no configuration. It’s similar to Amazon Web Services (AWS) Lambda or Netlify. It also provides edge-location hosting and caching.

Pact is a code-first, consumer-driven contract testing tool for developers and testers who code. It tests HTTP and message integrations using contract tests, which verify that inter-application messages conform to a shared understanding documented in a contract. This style of contract testing can reduce the need for large, heavily integrated test suites.

Apache JMeter is a commonly used Java-based performance test tool for testers. It is available as an open-source platform, and it can be used as a load testing tool for analyzing and measuring the performance of web applications.

Hoverfly is an automated, open-source API simulation tool designed specifically for integration tests. Users can test how APIs react in scenarios such as rate limits and network latency.

Grafana offers free metric visualization and analytics. Its dashboards let developers view time series data to watch how microservices respond to real-time traffic.

Gatling is a tool for load testing that is written in Scala. It can run simulations on multiple platforms, and then reports on metrics such as active user numbers and response times.