Drive alignment to your ideal state

Define clear engineering standards and track team performance with the deepest, most customizable, and easiest-to-use Scorecards on the market. Drive accountability, improve quality, and accelerate initiatives.

"Scorecards were powerful for us right out of the box. They immediately helped us identify abandoned codebases, which allowed us to have much more strategic conversations about the relevance of older projects. It also gave us a concrete way to track the adoption of our 'paved road' standards across the organization."

Minh Pham

Strategic Advisor to the SVP of Data, Engineering, and Operations

Getting Started

Cortex Scorecards make it simple to define standards, see how you’re tracking, and drive a culture of continuous improvement.

Define

Set clear rules and standards for operational maturity, reliability, security, and more.

Assess

See where your teams and services stand today, and identify gaps automatically.

Improve

Prioritize what to fix, set deadlines with initiatives, and repeat for ongoing improvement.

Gamify quality across teams

Turn best practices into friendly competition with team leaderboards and score comparisons. Motivate teams to raise their scores and celebrate wins together.

Meet targets for key initiatives

Transform standards into real outcomes by setting clear goals and target dates for critical initiatives. Cortex assigns owners, tracks progress, and keeps teams accountable so improvements happen on your timeline.

Track progress and report with confidence

See exactly how teams and services are performing against your standards over time. Progress Reports deliver clear insights to measure improvements, spot risks, and demonstrate results.

Example Scorecards

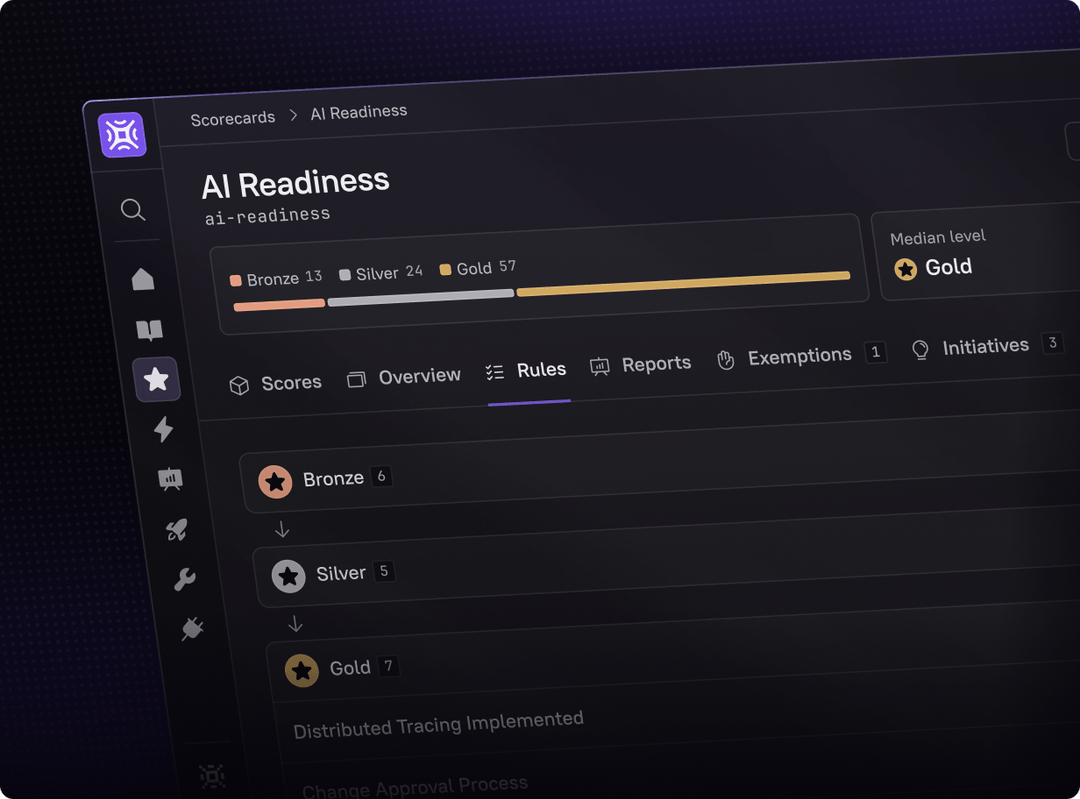

Build any Scorecard in minutes – from production readiness to security compliance to AI maturity. With data pulled automatically from your existing tools, it’s easy to define standards, track progress, and drive improvement across teams.

Establish the foundations for safe AI adoption with strong testing, clear ownership, and secure CI/CD processes. Use Cortex to enforce readiness before rolling out AI tools.